With much of the application landscape now built on, and built for, the Cloud, integration middleware also needs to embrace Cloud native principles and provide integration across applications both on the Cloud and on premise. This paper looks at how Cloud native technologies such as FaaS (Functions as a Service), Serverless architectures and Containers enable enterprises to provide a multi-channel, hybrid integration solution.

Cloud native adoption is reaching a critical mass

Applications in enterprises are moving from being on-premise-centric to Cloud-oriented. Driven by digital transformation goals, the initial Cloud adoption largely relied on IaaS (Infrastructure as a Service), as it mapped naturally to on-premise data centers. Existing integration middleware was rehosted on IaaS to leverage Cloud benefits. However, IaaS alone is not enough to get the real benefits of the Cloud, as simply rehosting software limits elasticity, flexibility and variability. It also means that the enterprise has to do its own maintenance and upgrades on the software. So, how do enterprises get to think like a Cloud company? Enterprises are now beginning to adopt a Cloud-first strategy, treating the Cloud as a first-class citizen when building applications. They are containerizing their applications and adopting Serverless architectures.

What does it mean for integration?

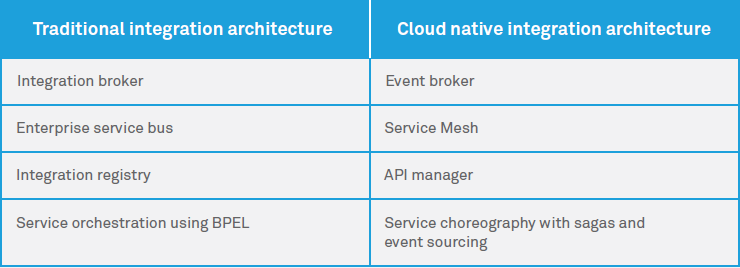

With new architectures such as Serverless, Functions as a Service (FaaS), Container-based applications and Platforms as a Service (PaaS) come new challenges for integration. The lines between A2A and B2B integration are increasingly blurred as enterprise applications move to the Cloud and organizations adopt Software as a Service (SaaS). Applications are becoming smarter and use APIs to expose their data. The traditional application adapters that spoke to specific ERPs or other applications are no longer viable. The new integration middleware has to be lean and lightweight. It should be smart enough to exchange data in real time within and across the enterprise, not only with applications but also with channels such as devices and sensors, and with smart interactions such as Chatbots, voice apps and highly personalized interfaces. The Cloud native services that these channels interact with are typically designed as microservices. With microservices come challenges such as the volume of integration points, orchestration and events. A smart integration middleware can solve these problems through the use of API Management tools, Service Meshes and Event Buses. These can reside on Serverless architectures and containers, which are fast becoming the norm that enables continuous delivery. The turnaround time for building integrations has to be reduced from weeks to hours as the endpoints have become smarter. With Agile, releases are small and frequent rather than large and few. The key is to enable enterprises to go to market faster.

Service Mesh, a Cloud native integration middleware, enables intelligent integration capabilities among services.

Emerging Cloud native integration landscape

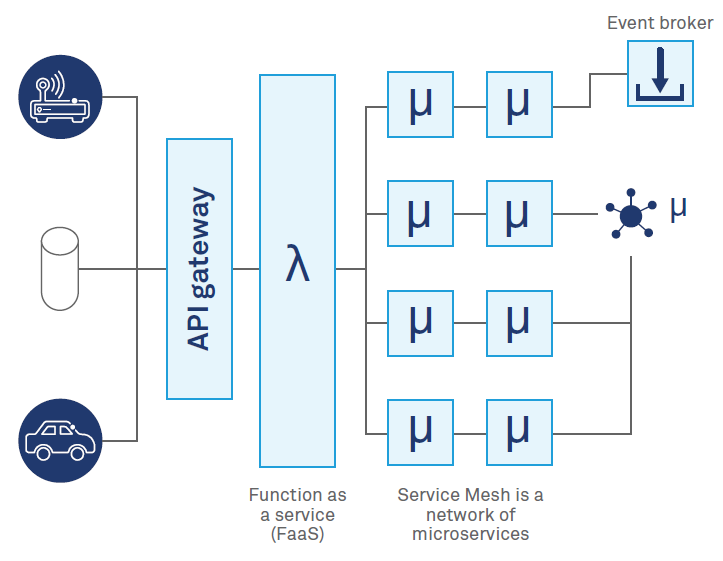

APIs are establishing themselves as the mechanism of choice for multi-channel integration. Microservices, the new building blocks of applications, require new types of orchestration and integration mechanisms. The ESB of yesteryear is giving way to API Gateways that provide access to these Microservices, enabling integration between API clients and applications within and outside the enterprise.
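The gateway role described above can be illustrated with a minimal sketch. It assumes an in-memory gateway that applies one cross-cutting policy (a trivial API key check) and then routes by path prefix; the names `ApiGateway`, `orders_service` and `customers_service` are hypothetical and do not correspond to any particular product.

```python
from typing import Callable, Dict

# Hypothetical backend microservices, modelled here as plain functions.
def orders_service(path: str) -> str:
    return f"orders handled {path}"

def customers_service(path: str) -> str:
    return f"customers handled {path}"

class ApiGateway:
    """Illustrative gateway: one policy check, then prefix-based routing."""

    def __init__(self) -> None:
        self._routes: Dict[str, Callable[[str], str]] = {}

    def register(self, prefix: str, service: Callable[[str], str]) -> None:
        self._routes[prefix] = service

    def handle(self, path: str, api_key: str) -> str:
        # Cross-cutting policy applied once, at the edge (assumed for illustration).
        if not api_key:
            return "401 missing API key"
        # Prefer the longest matching prefix so specific routes win.
        for prefix in sorted(self._routes, key=len, reverse=True):
            if path.startswith(prefix):
                return self._routes[prefix](path)
        return "404 no route"

gateway = ApiGateway()
gateway.register("/orders", orders_service)
gateway.register("/customers", customers_service)

print(gateway.handle("/orders/42", api_key="demo-key"))
```

A real gateway would add concerns such as throttling, authentication and transformation, but the routing idea stays the same: clients see one entry point while the microservices behind it remain independently deployable.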

Behind the API gateway, Service Mesh enables intelligent integration capabilities among services.

Service Mesh provides service discovery, routes requests to Cloud native service instances and handles failures. Linkerd, Istio and Consul are some of the popular Service Meshes available today, and Envoy is a proxy commonly used as the data plane in meshes such as Istio.
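As a rough illustration of the three responsibilities named above (discovery, routing and failure handling), the following sketch keeps a registry of service instances and fails over to the next instance when one is unreachable. It is an assumption-laden toy, not how any specific mesh is implemented; `ServiceRegistry`, `call_with_failover` and the two instances are hypothetical names.

```python
from typing import Callable, Dict, List

Instance = Callable[[str], str]

class ServiceRegistry:
    """Service discovery: maps a logical service name to its known instances."""

    def __init__(self) -> None:
        self._instances: Dict[str, List[Instance]] = {}

    def register(self, name: str, instance: Instance) -> None:
        self._instances.setdefault(name, []).append(instance)

    def instances(self, name: str) -> List[Instance]:
        return list(self._instances.get(name, []))

def call_with_failover(registry: ServiceRegistry, name: str, request: str) -> str:
    """Route the request to the first instance that answers; skip failed ones."""
    last_error = None
    for instance in registry.instances(name):
        try:
            return instance(request)
        except ConnectionError as exc:
            last_error = exc  # failure handling: try the next instance
    raise RuntimeError(f"no healthy instance for {name}") from last_error

# Hypothetical instances of an "inventory" service: one down, one healthy.
def failing_instance(request: str) -> str:
    raise ConnectionError("instance down")

def healthy_instance(request: str) -> str:
    return f"inventory ok: {request}"

registry = ServiceRegistry()
registry.register("inventory", failing_instance)
registry.register("inventory", healthy_instance)

print(call_with_failover(registry, "inventory", "sku-1"))
```

In a real mesh this logic lives in a sidecar proxy next to each service rather than in application code, which is what keeps the services themselves free of integration plumbing.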

Real-time insights need lightweight event brokers such as Kafka, which are replacing all-encompassing integration middleware. Event sourcing and the Saga pattern are emerging as the dominant styles for orchestration. Lightweight integration middleware is emerging, led by a new wave of micro-integration stacks such as Apache Camel, Kafka and RabbitMQ. These stacks leverage container deployment models such as the OSGi-based Apache Karaf.
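To make the Saga pattern mentioned above more concrete, here is a minimal orchestration sketch under simplifying assumptions: each step is a local action paired with a compensating action, and a failure triggers compensation of the completed steps in reverse order. The step names and the `run_saga` helper are hypothetical; a production saga would persist its state and exchange events through a broker.

```python
from typing import Callable, List, Tuple

# A step is (name, action, compensation); all three are illustrative.
Step = Tuple[str, Callable[[], None], Callable[[], None]]

def run_saga(steps: List[Step], log: List[str]) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse."""
    completed: List[Tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
            log.append(f"done:{name}")
            completed.append((name, compensate))
        except Exception:
            log.append(f"failed:{name}")
            for done_name, undo in reversed(completed):
                undo()
                log.append(f"compensated:{done_name}")
            return False
    return True

def reserve_stock() -> None:
    pass  # assume the inventory service accepted the reservation

def release_stock() -> None:
    pass  # compensating action for reserve_stock

def charge_payment() -> None:
    raise RuntimeError("payment declined")  # simulated failure

def refund_payment() -> None:
    pass  # compensating action for charge_payment

saga_log: List[str] = []
succeeded = run_saga(
    [
        ("reserve_stock", reserve_stock, release_stock),
        ("charge_payment", charge_payment, refund_payment),
    ],
    saga_log,
)
print(succeeded, saga_log)
```

The design point is that no distributed transaction spans the services: consistency is restored by explicit compensations instead.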

Functions as a Service (FaaS) is today largely represented by AWS Lambda, with Azure Functions and Google Cloud Functions following close behind. The idea that the usage of server infrastructure is tied to the actual time that a piece of code executes is exciting. In FaaS, functions are executed when an event occurs, such as the receipt of an email, the upload of a file, the arrival of a message or a custom-defined event. They can also be executed on a defined schedule. Integration driven by events or a schedule has been in place for years: interfaces are started on the arrival of a message, on the presence of a file in a directory, or nightly. Applying the concept to FaaS, integration between Cloud native applications can be performed by sending event messages or defining schedules to trigger functions that can then get or send data and process business logic. The smart endpoints in Martin Fowler’s principle of "smart endpoints and dumb pipes" are now represented by the functions that get executed. These functions and Cloud native services need orchestration on the Cloud. Step Functions from AWS orchestrates microservices built on AWS Lambda. Netflix Conductor similarly uses workflows to orchestrate tasks that represent microservices.
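The event-driven and schedule-driven triggering described above can be sketched with a toy in-memory platform. This is only an illustration: `FunctionPlatform`, the event type names and both functions are hypothetical, and the `(event, context)` handler signature is borrowed from the AWS Lambda Python convention purely for familiarity. No cloud SDK is used.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List

Handler = Callable[[Dict[str, Any], Any], str]

class FunctionPlatform:
    """Toy stand-in for a FaaS runtime: invokes functions bound to an event type."""

    def __init__(self) -> None:
        self._triggers: Dict[str, List[Handler]] = defaultdict(list)

    def on(self, event_type: str) -> Callable[[Handler], Handler]:
        # Decorator that binds a function to an event type.
        def decorator(fn: Handler) -> Handler:
            self._triggers[event_type].append(fn)
            return fn
        return decorator

    def emit(self, event_type: str, payload: Dict[str, Any]) -> List[str]:
        # Functions run only when their event occurs; nothing runs in between.
        event = {"type": event_type, "payload": payload}
        return [fn(event, None) for fn in self._triggers[event_type]]

platform = FunctionPlatform()

@platform.on("file.uploaded")
def sync_invoice(event: Dict[str, Any], context: Any) -> str:
    # Integration logic: fetch the uploaded file and push it to a target system.
    return f"synced {event['payload']['name']} to ERP"

@platform.on("schedule.nightly")
def nightly_export(event: Dict[str, Any], context: Any) -> str:
    # A scheduler would emit this event once per night.
    return "exported daily orders"

print(platform.emit("file.uploaded", {"name": "inv-1.csv"}))
print(platform.emit("schedule.nightly", {}))
```

Chaining several such functions into a business process is where an orchestrator such as Step Functions or Conductor comes in, since the functions themselves stay small and stateless.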

Fig. 1: Multi-channel integration in a Cloud native landscape

Fig. 2: Traditional vs. Cloud native integration architecture

Way forward for enterprises

Integration is everywhere. With the need for dedicated integration middleware getting diluted, integration is no longer the visible behemoth. It has become pervasive through APIs and microservices. It is no longer about deploying integration middleware or using iPaaS. It is time to think beyond hybrid Cloud integration by defining a cohesive integration strategy that can bring together services and build a truly agile and digital organization. Such a strategy not only facilitates the exchange of information between channels but also helps adopt and integrate Cloud native applications by providing a roadmap for integrations to become Cloud native, thus enabling an organization to be digital beyond boundaries.

Aravind Ajad Yarra - Distinguished Member of Technical Staff, Wipro.

Aravind is a chief architect and architecture practice leader focusing on emerging technologies and digital architectures. With over 22 years’ experience in the IT services industry, Aravind helps clients adopt emerging technologies to build smart applications leveraging Cloud Computing and Mobility. In his previous roles, he worked as a solution architect for several complex transformational programs across banking, capital markets and insurance verticals.

Danesh Hussain Zaki - Lead Architect - Integration Services, Wipro.

Danesh has over 20 years of experience in consulting, architecture, implementation and development. His core expertise is in Enterprise Integration, covering API Management, Open Source Middleware, Integration Platforms as a Service (iPaaS) and SOA. In his current role, Danesh is involved in defining solution architecture, strategic marketing and handling key deals across verticals.