The drive to transform quickly, to be nimble, is not just for playtime. The extreme pressures that the upstream oil and gas industry has experienced in recent years have driven companies to seek nimbleness in their enterprises. Price volatility, exponential data growth, and major shifts in the composition of the workforce are driving companies to be lean, efficient, responsive and proactive in the marketplace. The 4th Industrial Revolution [1] is also creating digital disruptions that include artificial intelligence, robotics, the Internet of Things, and virtual/augmented reality. These changes are ushering in technology and data opportunities at an unprecedented pace.

Enter the cloud, offering companies a foundation for flexibility in a rapidly evolving industry environment. It is the LEGO base for the upstream oil and gas industry: the platform upon which companies can extract amazing value from their businesses and then quickly transform as the world changes.

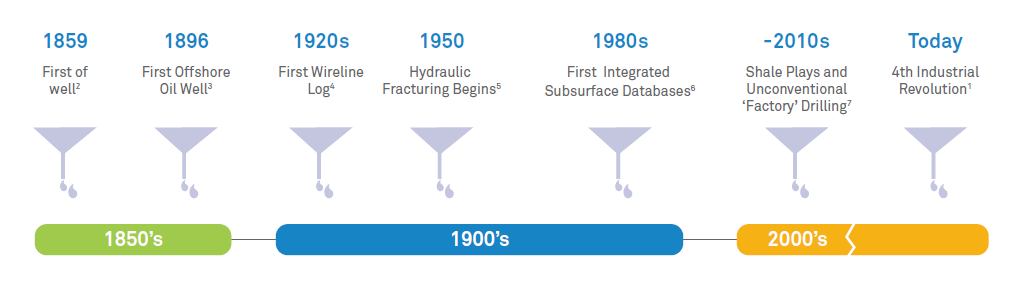

The industry has a history of courageous first moves: the first oil well in 1859 [2], offshore drilling in the late 1800s [3], the introduction of wireline logs in the 1920s [4], advanced subsurface software applications in the 1980s [6], and the past decade’s rapidly improving unconventional recovery methods [7]. The relationship between global supply and demand has required continuous step change.

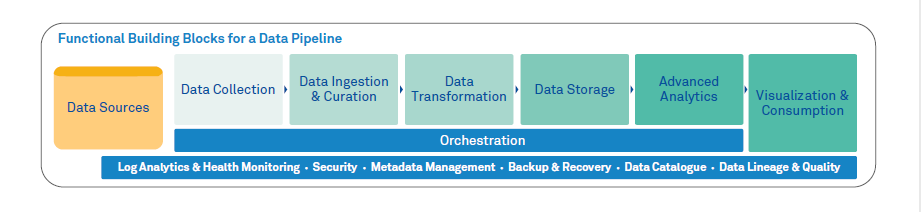

As the industry moves ahead, cloud adoption is essential to take advantage of the next wave of innovation. This journey starts with migrating to the cloud with ongoing operations driven by business opportunity, integrated workflows, personas, and data. The goal is to orchestrate smooth operation after cloud adoption and position a company to become more integrated and automated.

Data is key to a cloud-nimble approach. Many upstream businesses are fortunate to have access to rich historical data assets. For example, in the 1970s, a company in the North Sea began producing a reservoir that was estimated to have 800 million barrels of recoverable reserves [8]. By the late 1990s, the company had accrued large volumes of data from decades of drilling wells and producing the field in the form of wireline logs, mud logs, core samples and reprocessed seismic. This allowed them to conduct more detailed analysis, resulting in identifying an estimated additional 160 million barrels of recoverable reserves. How did they find these reserves? From the additional knowledge of the reservoir and the nearby pockets and traps that were detected in the subsequent and ongoing forensic examination of all the available historical and contemporary data.

This is a great example of infrastructure-led exploration (ILX) and of the rich pools of information and value that companies can detect and derive from their data stores. Cloud technologies offer a robust backdrop (a foundation, if you will) for enabling upstream asset teams to exploit rich data assets at a scale and pace previously untenable.

Drivers for big thinking

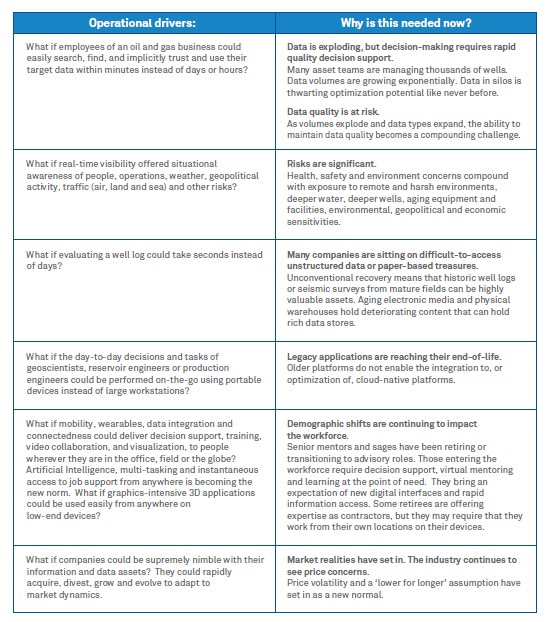

How can these foundational capabilities help companies respond to the complex challenges they experience today in areas like information access, logistics, situational awareness, aging assets, price volatility, and safety? Companies must think big to find the right solutions. Here are some drivers that can help companies envision the future they will build:

Being cloud-nimble

One of the biggest questions confronting exploration and production companies is how to proceed with cloud adoption. Traditionally, enterprise migrations are viewed as an IT-driven ‘lift and shift’ exercise, perhaps moving one application suite at a time. The company adopts an approach, and the business organizations and processes adapt accordingly. This can disrupt cross-functional workflows and user experience and generally cause havoc. There is no one-size-fits-all approach to moving and managing a business through a cloud migration.

Common concerns about cloud adoption include data security, the ability to handle large data volumes, the protection of intellectual property, network latency, the complexities of cloud agreements and whether legacy software and technologies will operate on a new platform. Even when these concerns are addressed satisfactorily, the oil and gas industry itself generates further complexity. There can be jurisdictional requirements mandating that data reside in a certain country. Or applications that are standard in an enterprise may have local instances in geographic assets running different versions or with local extensions or customizations. Or specialized systems may have been adopted in some local assets and not others.

How does a company beset with these and other challenges become cloud-nimble?

Two guiding principles can be used to aid in cloud-nimble adoption:

Drive adoptions with a focus on personas and their experiences, including the data and workflows that must support them:

The business organization and domain expertise must be involved in planning. An effective approach is to define comprehensive cloud adoption ‘portfolios’ that are a functioning set of domain workflows tied to user groups and work practices (including the personas and user experiences that must be supported), applications, interfaces, data, insights, security and infrastructure.

Design your move with innovation in mind:

Cloud adoption opens the door for innovation in leveraging the new platform effectively.

Pre-move considerations:

Move considerations:

Post-move considerations:

A nimble subsurface example

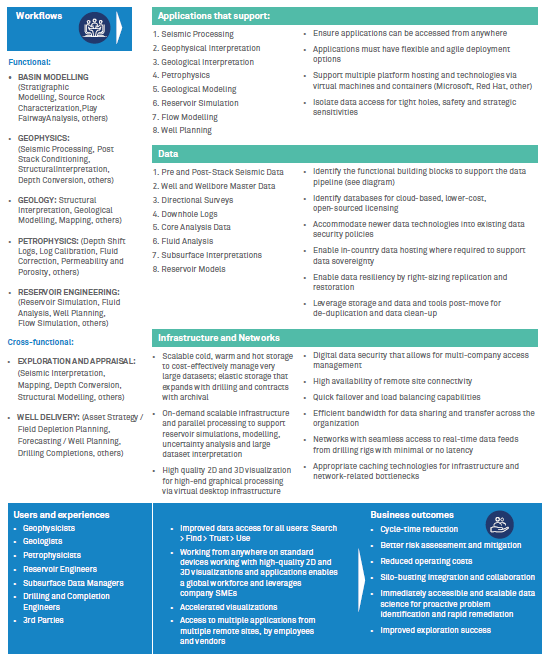

Using these two guiding principles, here is a hypothetical portfolio for a cloud move covering basin modelling, geophysics, geology and reservoir engineering workflows:

The illustration highlights the importance of maintaining the integrity of user experience and workflows, including cross-functional workflows.
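One way to make the portfolio idea concrete is to record, for each workflow, the personas, applications and data it depends on, and then check that the portfolio moves everything those workflows need. The sketch below is only an illustration under stated assumptions: the `Workflow` and `Portfolio` classes, their fields, and the example names (`interp_app`, `sim_app`, and so on) are hypothetical and do not come from any Wipro or Microsoft tooling.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Workflow:
    """A domain workflow and what it depends on (all names are hypothetical)."""
    name: str
    personas: Set[str]
    applications: Set[str]
    datasets: Set[str]


@dataclass
class Portfolio:
    """A candidate set of workflows, applications and datasets to move together."""
    name: str
    workflows: List[Workflow] = field(default_factory=list)
    applications: Set[str] = field(default_factory=set)
    datasets: Set[str] = field(default_factory=set)

    def gaps(self) -> Dict[str, Set[str]]:
        """Per workflow, list the applications and datasets it needs that are not in the portfolio."""
        result: Dict[str, Set[str]] = {}
        for wf in self.workflows:
            missing = (wf.applications - self.applications) | (wf.datasets - self.datasets)
            if missing:
                result[wf.name] = missing
        return result


if __name__ == "__main__":
    portfolio = Portfolio(
        name="subsurface",
        workflows=[
            Workflow("seismic interpretation", {"geophysicist"}, {"interp_app"}, {"seismic"}),
            Workflow("reservoir simulation", {"reservoir engineer"}, {"sim_app"},
                     {"geo_model", "production"}),
        ],
        applications={"interp_app", "sim_app"},
        datasets={"seismic", "geo_model"},
    )
    # The simulation workflow depends on production data that this portfolio leaves behind.
    print(portfolio.gaps())
```

In this example the check flags that the reservoir simulation workflow would lose access to its production data, which is the kind of broken cross-functional dependency a portfolio-based plan is meant to surface before the move.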

Consider this common scenario in unconventional shale drilling. It is underpinned by the power of these cloud capabilities:

A company is engaged in drilling a new well. The complexity of the subsurface means that there are typically differences in the actual rocks that will only ever be known as the well is drilled. The geophysicists and geologists can then complete an update to their key control points in their interpretations. They have integrated and simultaneous access to all seismic and technical well data (1) and combine that with all available data from nearby wells (2) to develop a new 3D geological model. The reservoir engineer then takes the updated geological model and runs a fluid flow simulation analysis (3) that further highlights the reservoir sections where the maximum flow of hydrocarbons can be achieved. The integrated asset team then plans the best well path based on the enriched geological model.

However, the ideal well path is not always easy to drill, or in some cases is not technically possible to drill. So, the subsurface asset team then collaborates and iterates with the drilling and completions engineers to complete a viable engineered plan. They know that once drilling begins, it will be critical to minimize Non-Productive Time. To do this, the actual well must be drilled with the best possible track (4) through the reservoir and it must be done safely. Then drilling begins. This is the point at which the well plan and the interpretive subsurface models are truly tested. The asset team monitors and performs geosteering as needed, around-the-clock.

As is often the case, the team encounters significant differences mid-well. At that point, the integrated asset team and the drillers begin a virtual real-time collaboration (5) to adjust the models. All the workflows, data and new well data are harnessed (4). Within minutes they can re-run the fluid flow analysis to validate the maximum flow of hydrocarbons and then adjust the well paths.

After nimbleness, what?

Once a company has taken this step, what is next? Companies will be able to think even bigger. Nimble upstream businesses will be able to add in cloud-native services and applications. Over time, employees’ experiences with their new world will become natural, predictive and conversational. It means the wealth of knowledge living in the organization will begin to be curated, virtualized and contextualized.

The integration of data stores and organizations will enable new insight that was previously unachievable. Companies will be able to harness a diversity of perspectives and move closer to the ‘one team’ concept that many have tried to embrace in the industry. For example, the drilling and fracking of a complicated unconventional stacked pay formation would yield improved results if subsurface scientists, drilling engineers and completion engineers could collaborate perfectly while rapidly accessing all available optimally conditioned data.

Data processing, data volumes and diversity of data types will no longer be limited by cumbersome application boundaries and availability challenges. All organizations and technical functions will be able to mine all data related to all assets in real time.

Processing power and artificial intelligence will digitize and interrogate hundreds of thousands of scanned mud log and wireline log images, find every indication of hydrocarbon shows, and automatically and rapidly plot those points in a 3D geospatial portal. Key workflows will be data-driven and automated, removing unnecessary inefficiencies and associated risk. For example, in an unconventional asset team, a well factory might be delivering hundreds of wells a year using numerous drilling rigs. An orchestration engine could monitor the disparate target systems for the information that a drilling permit form requires. As soon as the data is detected, the form is automatically populated and dispatched to the appropriate regulatory body, without human intervention.
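The permit-form scenario can be sketched in a few lines of code. This is a minimal illustration only, not a description of any Azure service or of an existing product: the field names in `REQUIRED_FIELDS`, the `PermitOrchestrator` class, and the source and dispatch callables are all hypothetical stand-ins for real systems of record and a real regulatory submission channel.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical permit fields; a real form is defined by the regulatory body.
REQUIRED_FIELDS = ("well_name", "surface_location", "target_depth_ft", "operator")


@dataclass
class PermitOrchestrator:
    """Collects permit fields from several source systems and dispatches the form once complete."""
    sources: List[Callable[[], Dict[str, object]]]   # each callable reads one source system
    dispatch: Callable[[Dict[str, object]], None]    # sends the completed form onward
    form: Dict[str, object] = field(default_factory=dict)
    dispatched: bool = False

    def missing_fields(self) -> List[str]:
        return [name for name in REQUIRED_FIELDS if name not in self.form]

    def poll_once(self) -> bool:
        """Poll every source once; dispatch the form if all required fields are present."""
        if self.dispatched:
            return True
        for read in self.sources:
            for name, value in read().items():
                # Keep only fields the form needs, and never overwrite an earlier value.
                if name in REQUIRED_FIELDS and name not in self.form:
                    self.form[name] = value
        if not self.missing_fields():
            self.dispatch(dict(self.form))
            self.dispatched = True
        return self.dispatched


if __name__ == "__main__":
    well_planning = {"well_name": "Example 1H", "target_depth_ft": 9500}
    land_system: Dict[str, object] = {}
    sent: List[Dict[str, object]] = []

    orchestrator = PermitOrchestrator(
        sources=[lambda: well_planning, lambda: land_system],
        dispatch=sent.append,
    )
    orchestrator.poll_once()                  # two fields are still missing
    print(orchestrator.missing_fields())
    land_system.update({"surface_location": "placeholder", "operator": "Example Operator"})
    orchestrator.poll_once()                  # form is now complete and is dispatched once
    print(len(sent))
```

A production orchestration engine would more likely react to events from the source systems than poll them, and it would add validation and audit logging; polling is used here only to keep the sketch self-contained.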

And it all starts with the cloud, that base for continuous transformation.

Azure capabilities are engineered for oil and gas

Data Neutrality

Field and production data and the algorithms that drive them are business differentiators and could mean losses in the range of billions of dollars if compromised or used for commercial purposes. To address the industry’s unique needs for data protection and sharing, Microsoft has announced a set of principles, and all Azure services are monitored for adherence.

Privacy and data protection are grounded in Microsoft’s commitment to organizations’ ownership of and control over their collection, use, and distribution of information. We strive to be transparent in our privacy practices, offer privacy choices, and responsibly manage the data we store and process. One measure of our commitment to the privacy of customer data is our adoption of the world’s first code of practice for cloud privacy, ISO/IEC 27018.

Azure meets a broad set of international and industry-specific compliance standards, such as General Data Protection Regulation (GDPR), ISO 27001, HIPAA, FedRAMP, SOC 1 and SOC 2, as well as country-specific standards, including Australia IRAP, UK G-Cloud, and Singapore MTCS. Rigorous third-party audits, such as those by the British Standards Institute, verify Azure’s adherence to the strict security controls these standards mandate.

Hybrid Capability

The nature of evaluation and exploration necessitates massive data and compute on premises, as close as possible to the assets, as well as in the cloud. Azure Stack and Edge capabilities are built to provide a common development and deployment platform for on-premises and cloud environments as well as tactical datacenters in geo-locked locations.

Co-Engineering with Strategic Customers and Partners

Microsoft, in its customer-focused strategy, is working closely with many of our strategic customers to influence and re-engineer our own products and services to meet industry requirements. Our goal is to enable the specific, key requirements that differentiate our customers as they leverage cloud services for their own workflows. For example, some of the key design principles we applied to our newly deployed High Performance Computing offerings, Hb and Hc, came as direct feedback from our large oil and gas customers. We’ve also worked closely with other customers to redefine how we offer some of our services, like IoT Hub, to match the scale and volume expected by oil and gas customers and to remove platform limitations that slowed our customers’ adoption and innovation. Microsoft is committed to continuing to co-develop, co-innovate and co-engineer our products to match our customers’ expectations and future requirements, as this is an essential strategy for bringing digital transformation to the oil and gas industry.

How to move forward

Wipro and Microsoft have formed a strategic partnership to provide solutions and services for running subsurface workflows on Azure Cloud and Intelligent Edge.

For more information:

https://azure.microsoft.com/en-us/industries/energy/

https://www.wipro.com/oil-and-gas/

Please reach out to your Microsoft or Wipro representative for a discovery workshop.

References

[1] https://www.weforum.org/centre-for-the-fourth-industrial-revolution - World Economic Forum Centre for the Fourth Industrial Revolution

[2] https://en.wikipedia.org/wiki/Drake_Well - The Drake Well was the first oil well drilled in Pennsylvania in 1859; 69.5 ft deep

[3] https://aoghs.org/offshore-history/offshore-oil-history/ - The first offshore well was drilled in 1896 off the coast of California, from an extended pier in Santa Barbara

[4] Schlumberger website (www.slb.com) - “The first well log was obtained in 1927 in Pechelbronn field in Alsace, France. The tool, invented by Conrad and Marcel Schlumberger, measured electrical resistance of the earth.”

[5] https://en.wikipedia.org/wiki/Hydraulic_fracturing - “Hydraulic fracturing began as an experiment in 1947, and the first commercially successful application followed in 1950.”

[6] https://www.landmark.solutions/About - “Landmark broke ground again in 1989 with OpenWorks® project database, the first software framework integrating E&P applications and data.”

[7] https://www.eia.gov/analysis/studies/usshalegas/pdf/usshaleplays.pdf - “The proliferation of activity into new shale plays has increased dry shale gas production in the United States from 1.0 trillion cubic feet in 2006 to 4.8 trillion cubic feet, or 23 percent of total U.S. dry natural gas production, in 2010. Wet shale gas reserves increased to about 60.64 trillion cubic feet by year-end 2009, when they comprised about 21 percent of overall U.S. natural gas reserves, now at the highest level since 1971. Oil production from shale plays, notably the Bakken Shale in North Dakota and Montana, has also grown rapidly in recent years.”

[8] http://mem.lyellcollection.org/content/14/1/33 - A summary of the Beryl field

Wipro’s Energy vertical is recognized in the industry for deep domain expertise in oil and gas, for upstream data management and for industry-contextual digital platforms. Upstream practitioners include geophysicists, geologists, petrophysicists, reservoir and production engineers, data management specialists, petrotechnical platform architects and systems engineers. In 2019, Wipro is proud to be active within the Open Subsurface Data Universe™ Forum. Digital practitioners and domain specialists bring deep skills in cloud adoption and architectures that enable upstream businesses. Authors include Mahendra Ayyalasomayajula and Thothathri Gurunathan – Global Digital Solutions for the Energy Industry; Mark Wiseman, Paul Dejager, Graham Cain, Neil Varty, Utsav Upadhyaya, Avinash Darisa – Upstream Exploration Consulting.

Microsoft’s Energy vertical is empowering strategic customers and partners in the oil and gas industry to achieve more, building platform capabilities and Azure services to deliver the most demanding workloads on the Azure Cloud and Intelligent Edge. Team members include petrophysicists, geologists, cloud and software engineers, data scientists and security professionals who engage with customer business representatives to identify use cases and help build cloud-based solutions. Microsoft works with industry working groups such as PPDM, Energistics, OPC Foundation, Open Process Automation Foundation and Open Subsurface Data Universe Forum. Authors include Kadri Umay, Hussein Shel, Oswaldo Villoria – Principal Program Manager, Oil and Gas; Jose Valls – CTO, Oil and Gas Industry.