Less than a decade after breaking the Nazi encryption machine Enigma and helping the Allied Forces win World War II, mathematician Alan Turing changed history a second time with one simple question: "Can machines think?"

Artificial Intelligence (AI) has grown rapidly in the last decade. It is now poised to revolutionize the world by ushering in a new era of intelligent machines that can mimic human behavior and cognition, so much so that Sundar Pichai (CEO, Alphabet) has famously described AI as more profound than the discovery of fire.

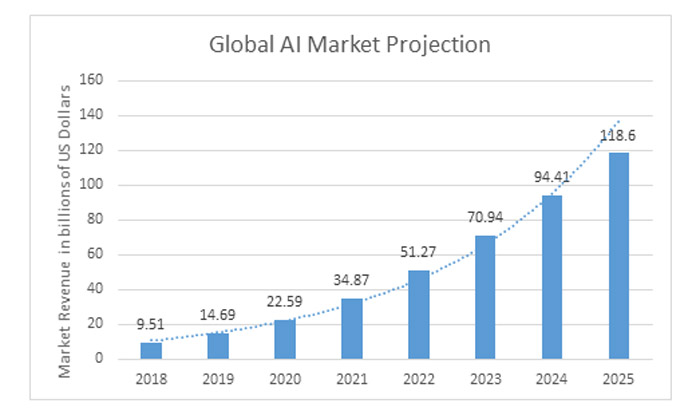

Businesses across sectors, including Automotive, Biosciences, Healthcare, and Finance among others, have been quick to adopt AI and make it a vital part of their product roadmaps, as evidenced by the near-exponential growth projected for the global AI market (Source: Statista).

Roadblocks to Applied AI - The Data Story

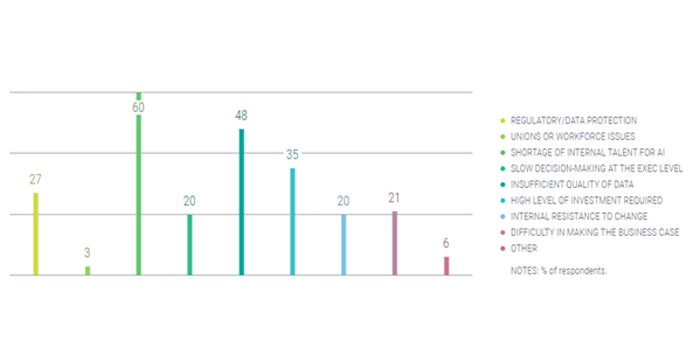

In a recent study conducted by MIT Technology Review Insights, senior executives working at midsize-to-large organizations around the world were surveyed regarding the application of AI in their organizations. They named the following as the top challenges they face in AI deployment –

It is interesting to note that the top three roadblocks to applied AI are interlinked via the data story. Companies across the world invest heavily in hiring AI Specialists, ML Engineers, Data Scientists, and Data Engineers (LinkedIn’s 2020 Emerging Jobs Report), but the process of data generation for these specialized professionals to work on is not as prominently discussed.

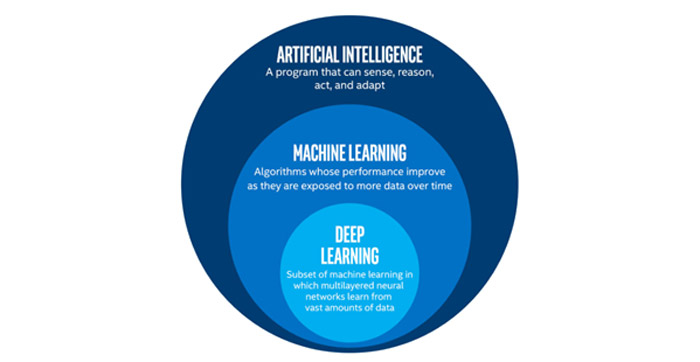

Improvements in Artificial Intelligence are fed by improved cognitive capabilities driven by Machine Learning; and, as is the case with all learning, Machine Learning demands high-quality data for algorithms to learn from.

It is common knowledge that the algorithms powering AI require huge amounts of data to learn continuously and make human-like decisions, but the secret sauce behind every AI giant is the huge investment that has gone into the continuous generation of high-quality training data for their machine-learning algorithms. The concept of Garbage In, Garbage Out becomes all the more relevant in the development of AI algorithms: the better the quality of the training data, the better the performance of the algorithm.

Artificial Intelligence is a highly advanced field, but the process of building high-quality training data does not ask for the same skillset required to, say, make a self-driving car. Instead, it requires a specialized, trained workforce that properly understands the project specifications and requirements in order to deliver customized ground truth for specialized ML and AI applications, which in turn requires a significant investment of training time and money. Popular examples of Machine Learning datasets (continuing the self-driving car example) include the MS COCO dataset and the KITTI Vision Benchmark Suite. Companies need to think of AI and machine learning as the engines that will drive the amazing things they want to accomplish; like every engine, they need the right fuel to run well.

Data Annotation – The Unsung Hero

Data Annotation (also known as Data Labelling) is the process of creating high-quality ground-truth data custom-made for training an ML or AI algorithm. The tasks such algorithms perform range from object detection and classification all the way to self-driving, using state-of-the-art Computer Vision algorithms. The process generally involves human annotators labelling data across customized, user-defined classes.

Data annotation is critical to ensuring that an Artificial Intelligence or Machine Learning project can scale. The process itself can be manual or partially automated (using more AI) – however, seldom can you completely remove the human involvement in creating training data during the initial stages of any project.

Data annotation can have many modalities depending on the format of the data and/or the application of the training data. Some popular modalities that have emerged include –

Text Annotation

Text annotation enables machines to understand text and the meaning behind combinations of words and sentences. Annotators label keywords in sentences, and the algorithm stitches together the big picture by making meaningful associations between those keywords. Text annotation is of immense importance in Natural Language Processing (NLP).
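As a rough illustration of keyword labelling, the sketch below stores each annotated keyword as a character range plus a class label. The `SpanLabel` structure, the `labelled_spans` helper, and the `PERSON` and `MACHINE` class names are assumptions made for this example, not the format of any particular annotation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpanLabel:
    """One annotated keyword: a character range in the text plus a class label."""
    start: int  # index of the first character (inclusive)
    end: int    # index one past the last character (exclusive)
    label: str  # hypothetical, user-defined class name


def labelled_spans(text: str, spans: list[SpanLabel]) -> list[tuple[str, str]]:
    """Return (keyword, label) pairs, rejecting spans that fall outside the text."""
    pairs = []
    for span in spans:
        if not 0 <= span.start < span.end <= len(text):
            raise ValueError(f"span {span} falls outside the text")
        pairs.append((text[span.start:span.end], span.label))
    return pairs


sentence = "Alan Turing broke Enigma."
annotations = [
    SpanLabel(0, 11, "PERSON"),    # covers "Alan Turing"
    SpanLabel(18, 24, "MACHINE"),  # covers "Enigma"
]
pairs = labelled_spans(sentence, annotations)
print(pairs)
```

Storing offsets rather than the keywords themselves keeps each label tied to an exact position, so a word that occurs twice in a sentence can carry two different labels.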

Keypoint Annotation

In specific use-cases such as human pose estimation, just identifying and labelling the keypoints in the raw data serves the purpose better than other means of annotation. Here, only important features (landmarks) of an object are labelled to obtain an approximation of the features of the object.
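A keypoint annotation for pose estimation can be pictured as a set of named landmarks plus the pairs of landmarks that form limbs. The landmark names, pixel coordinates, and the `limb_lengths` helper below are hypothetical and only sketch the idea.

```python
import math

# Hypothetical landmarks for one arm, as (x, y) pixel coordinates.
pose = {
    "left_shoulder": (120.0, 80.0),
    "left_elbow": (120.0, 130.0),
    "left_wrist": (150.0, 170.0),
}

# Pairs of landmarks that are joined to approximate the limb.
skeleton = [("left_shoulder", "left_elbow"), ("left_elbow", "left_wrist")]


def limb_lengths(pose, skeleton):
    """Return the pixel length of every limb whose two endpoints are labelled."""
    lengths = {}
    for start, end in skeleton:
        if start not in pose or end not in pose:
            raise KeyError(f"limb {start}-{end} references an unlabelled keypoint")
        lengths[(start, end)] = math.dist(pose[start], pose[end])
    return lengths


lengths = limb_lengths(pose, skeleton)
print(lengths)
```

Three labelled points are enough here to approximate the position and extent of the whole arm, which is why keypoints can serve better than a full outline for pose estimation.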

Polyline Annotation

Polyline annotation is a form of annotation specific to the autonomous vehicle segment. Here, lanes are labelled with polylines so that autonomous vehicles can detect drivable areas and differentiate between lanes meant for trucks, cars, cyclists, and so on.

Bounding Box Annotation

Bounding Box annotation uses tight 2D or 3D bounding boxes to outline and label objects according to a stringent, project-specific set of rules. The resulting training dataset is used to train algorithms in applications such as object detection and localization, among others.
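One common way to quantify how tight a 2D box is relative to a reference box is intersection over union (IoU). The article does not prescribe a metric, so treat the sketch below as an assumption; the `Box` type and the example coordinates are illustrative.

```python
from typing import NamedTuple


class Box(NamedTuple):
    """Axis-aligned 2D box in pixel coordinates."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def area(box: Box) -> float:
    """Area of the box; zero if the box is empty or inverted."""
    return max(0.0, box.x_max - box.x_min) * max(0.0, box.y_max - box.y_min)


def iou(a: Box, b: Box) -> float:
    """Intersection over union: 1.0 for identical boxes, 0.0 for disjoint ones."""
    overlap = Box(
        max(a.x_min, b.x_min),
        max(a.y_min, b.y_min),
        min(a.x_max, b.x_max),
        min(a.y_max, b.y_max),
    )
    overlap_area = area(overlap)
    union_area = area(a) + area(b) - overlap_area
    return overlap_area / union_area if union_area > 0 else 0.0


reference = Box(10, 10, 50, 50)  # tight box around the object
loose = Box(5, 5, 55, 55)        # the same object with a 5-pixel margin
score = iou(reference, loose)
print(f"IoU = {score:.2f}")
```

A margin of only five pixels on each side already lowers the score noticeably, which illustrates why annotation guidelines tend to insist on tight boxes.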

Semantic Segmentation

Semantic Segmentation is the association of each pixel in an image with one of many user-defined classes. As the name suggests, segmentation splits up the image into different sectors that are easily identifiable by an algorithm. Continuing our reference to the self-driving car, semantic segmentation is commonly applied in distinguishing drivable regions (roads) from non-drivable regions (footpaths).
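In data terms, a segmentation label is a grid with the same shape as the image, holding one class id per pixel. The tiny mask and the class ids below are made up for illustration, and `class_pixel_share` is a hypothetical helper.

```python
from collections import Counter

# Hypothetical user-defined classes: id -> name.
CLASSES = {0: "road", 1: "footpath"}

# A 3x4 "image": every pixel carries exactly one class id.
mask = [
    [1, 0, 0, 1],
    [1, 0, 0, 1],
    [1, 0, 0, 1],
]


def class_pixel_share(mask, classes):
    """Return the fraction of pixels assigned to each class."""
    counts = Counter(pixel for row in mask for pixel in row)
    unknown = set(counts) - set(classes)
    if unknown:
        raise ValueError(f"mask contains undefined class ids: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("mask is empty")
    return {name: counts[class_id] / total for class_id, name in classes.items()}


share = class_pixel_share(mask, CLASSES)
print(share)
```

The check for undefined class ids reflects the rule that every pixel must belong to one of the user-defined classes; a stray id usually points to an annotation error.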

Video Annotation

Video Annotation enables object detection, localization, and tracking across frames, typically by making use of bounding boxes. Considering our use case of self-driving cars, AI algorithms can use this data to make informed navigation decisions, taking into account the likely trajectories of any pedestrians in the vicinity.
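To make the link between per-frame boxes and trajectories concrete, the sketch below treats a track as a mapping from frame index to bounding box and derives the path of the box centre. The track data, the box layout `(x_min, y_min, x_max, y_max)`, and the helper names are assumptions for this example.

```python
def centre(box):
    """Centre point of an (x_min, y_min, x_max, y_max) box."""
    x_min, y_min, x_max, y_max = box
    return ((x_min + x_max) / 2, (y_min + y_max) / 2)


def trajectory(track):
    """Return (frame, centre) pairs for one tracked object, ordered by frame."""
    return [(frame, centre(track[frame])) for frame in sorted(track)]


def average_velocity(track):
    """Average centre displacement in pixels per frame over the whole track."""
    points = trajectory(track)
    if len(points) < 2:
        raise ValueError("need at least two annotated frames")
    (first_frame, (x0, y0)), (last_frame, (x1, y1)) = points[0], points[-1]
    frames = last_frame - first_frame
    return ((x1 - x0) / frames, (y1 - y0) / frames)


# Hypothetical pedestrian annotated with the same track id in three frames.
pedestrian_track = {
    0: (100, 200, 140, 300),
    1: (104, 200, 144, 300),
    2: (108, 200, 148, 300),
}
path = trajectory(pedestrian_track)
velocity = average_velocity(pedestrian_track)
print(path, velocity)
```

Because every box in the track belongs to the same object, the sequence of centres gives a simple motion estimate that a planner could extrapolate.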

3D Point Cloud Annotation

Point cloud annotation is a customized application of annotation where the input is the point cloud generated by sensors such as LiDAR and RADAR. In our use case of a self-driving car, these sensors are mounted on the car. The point clouds thus generated are annotated with either 3D bounding boxes or semantic segmentation.
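A 3D bounding box label effectively selects the subset of points that belong to one object. The sketch below assumes an axis-aligned box for simplicity (boxes in practice are often rotated to match the object's heading), and the point coordinates and helper name are illustrative.

```python
def points_in_box(points, box_min, box_max):
    """Return the points that lie inside an axis-aligned 3D box (bounds inclusive)."""
    return [
        point
        for point in points
        if all(low <= value <= high for value, low, high in zip(point, box_min, box_max))
    ]


# Hypothetical (x, y, z) returns, in metres.
cloud = [
    (1.0, 0.5, 0.2),
    (5.0, 2.0, 0.1),
    (1.5, 0.8, 1.0),
    (1.2, 0.4, 3.0),
]

# Simplifying assumption: the annotated box is aligned with the coordinate axes.
car_min, car_max = (0.0, 0.0, 0.0), (2.0, 1.0, 1.5)
inside = points_in_box(cloud, car_min, car_max)
print(inside)
```

The same selection can be read as a coarse form of segmentation: every point inside the box inherits the box's class, and every point outside it does not.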

Conclusion

Artificial Intelligence is the future of business. It will bring about changes beyond the most obvious, and companies that learn to harness its powers are going to be the ones that survive the next industrial revolution. However, even the most technologically advanced algorithm will not be able to solve even basic problems without the right data, in the right volumes and of high quality. This is the power of data annotation: it is nothing short of the driver of Industrial Revolution 4.0.

For more information, take a look at our webpage - https://www.wipro.com/engineering/annotation-services/

References:

https://www.statista.com/statistics/607716/worldwide-artificial-intelligence-market-revenues/

https://insights.techreview.com/live-ai-poll-key-stats/

https://blog.linkedin.com/2019/december/10/the-jobs-of-tomorrow-linkedins-2020-emerging-jobs-report

https://towardsdatascience.com/cousins-of-artificial-intelligence-dda4edc27b55

Kiran Kishore

Kiran is a Business Consultant for Wipro’s Autonomous Systems and Robotics practice. He leads product management for Wipro’s Auto Annotation Studio – a tool for data annotation and management, which he drives through consulting, go-to-market offerings, product development, and thought leadership initiatives. He holds an MBA from IIM Raipur and is passionate about the world of possibilities using autonomous mobility. He can be reached at kiran.kishore1@wipro.com.

Sreesankar R

Sreesankar is a Senior Architect in Wipro’s Autonomous Systems and Robotics practice team. He has over 19 years of experience in varied domains like Automotive, Embedded, and Securities. Currently, he leads the Wipro Auto Annotation Studio team, focusing on Computer Vision, Sensor fusion, Machine Learning, and Explainable AI, with the objective of managing and improving the AI system lifecycle. He can be reached at sree.sankar@wipro.com.