In Part 5, we introduced the infrastructure and operational discipline needed to scale a workforce of autonomous agents - the Agentic OS, MCP and A2A as standardised communication protocols, and Agentic Ops as the governance discipline that keeps the workforce aligned over time.

With that foundation in place, a harder question surfaces: how do you trust a system that acts autonomously on critical infrastructure, holds sensitive customer data, and makes decisions that directly affect service quality for millions of users? Autonomy is technically attainable. Trustworthy autonomy is a different challenge.

This part addresses the security, privacy, and governance guardrails that make autonomous operations safe to deploy at scale in a telco environment.

Zero Trust for Agents

In traditional network operations, the primary security concern is human operators - controlling who can access what, and ensuring changes are authorised. In an agentic environment, the agent workforce itself becomes an equally important security surface. A misconfigured, over-privileged, or compromised agent - whether internal or from a partner ecosystem - can cause as much damage as a malicious human actor.

The response is to apply the same Zero Trust principle to agents that modern security frameworks apply to users: treat every agent as untrusted by default, verify every interaction, and grant only the minimum privilege needed for each specific task.

Tool Isolation: Agents cannot execute arbitrary commands on the network. They can only invoke pre-approved tools through governed interfaces. This boundary is critical: even if the language model layer of an agent produces an incorrect or hallucinated conclusion, it is constrained to requesting documented tools rather than inventing dangerous commands. The intelligence layer and the execution layer are deliberately separated.

Semantic Verification via A2A: In a multi-partner ecosystem - where a vendor's agent may pass findings to an operator's RCA Agent - every inter-agent message is authenticated and integrity-verified before it enters the decision loop. A spoofed or tampered message from a partner agent is rejected at this boundary, so it cannot inject invalid context into the reasoning chain.
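To make the authenticate-then-verify step concrete, here is a minimal Python sketch. It is not the A2A specification's own mechanism: it assumes a shared-secret HMAC purely for illustration, and the names `PARTNER_KEYS`, `sign_message`, and `verify_message` are hypothetical. A production ecosystem would more plausibly rely on per-agent asymmetric keys issued by an identity service.

```python
import hashlib
import hmac
import json

# Hypothetical shared secrets keyed by sender agent ID (illustration only).
PARTNER_KEYS = {"vendor-ran-agent": b"demo-secret-not-for-production"}


def canonical_bytes(payload: dict) -> bytes:
    """Serialise deterministically so sender and receiver sign the same bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_message(sender: str, payload: dict) -> dict:
    """Attach an HMAC-SHA256 tag computed over the canonical payload."""
    tag = hmac.new(PARTNER_KEYS[sender], canonical_bytes(payload), hashlib.sha256).hexdigest()
    return {"sender": sender, "payload": payload, "signature": tag}


def verify_message(message: dict) -> bool:
    """Return True only if the sender is known and the payload is unmodified."""
    key = PARTNER_KEYS.get(message.get("sender"))
    if key is None:
        return False  # unknown sender: reject before it reaches the decision loop
    expected = hmac.new(key, canonical_bytes(message.get("payload", {})), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the tag matched.
    return hmac.compare_digest(expected, str(message.get("signature", "")))
```

A receiving agent would call `verify_message` on every inbound message and discard anything that fails. Note that this check only establishes who sent the message and that it was not altered in transit; whether the content is plausible is a separate question.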

Schema Enforcement: Every tool and data source exposes a strict input schema. Malformed or out-of-policy inputs are rejected at the interface layer, reducing the risk of injection attacks or unintended privilege escalation.
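Tool isolation and schema enforcement can be sketched together as a small registry that sits between the agent and the network. The tool name `restart_cell`, its parameters, and the `REGISTRY` structure below are all hypothetical; the point is only that an agent's request is either a registered tool with valid inputs or it is rejected.

```python
from dataclasses import dataclass
from typing import Any, Callable


class ToolRequestRejected(Exception):
    """Raised when a request names an unknown tool or violates its schema."""


@dataclass(frozen=True)
class Tool:
    handler: Callable[..., Any]
    schema: dict[str, type]   # parameter name -> required type
    allowed: dict[str, set]   # optional allow-list of values per parameter


def restart_cell(cell_id: str, mode: str) -> str:
    # Placeholder for a governed call into the network domain.
    return f"restart requested for {cell_id} ({mode})"


# Hypothetical registry of pre-approved tools.
REGISTRY = {
    "restart_cell": Tool(
        handler=restart_cell,
        schema={"cell_id": str, "mode": str},
        allowed={"mode": {"graceful", "forced"}},
    ),
}


def invoke(tool_name: str, args: dict[str, Any]) -> Any:
    """Run a tool only if it is registered and its inputs match the schema."""
    tool = REGISTRY.get(tool_name)
    if tool is None:
        raise ToolRequestRejected(f"tool not registered: {tool_name}")
    if set(args) != set(tool.schema):
        raise ToolRequestRejected("unexpected or missing parameters")
    for name, expected_type in tool.schema.items():
        if not isinstance(args[name], expected_type):
            raise ToolRequestRejected(f"{name} must be {expected_type.__name__}")
        if name in tool.allowed and args[name] not in tool.allowed[name]:
            raise ToolRequestRejected(f"{name} value not permitted")
    return tool.handler(**args)
```

Because the model layer can only produce a tool name and an argument dictionary, a hallucinated command has nowhere to go: it fails the registry lookup or the schema check before anything touches the network.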

Agent Identity and Role-Based Access Control

A Governance Agent manages the identity, authentication, and authorisation of every agent in the workforce. Every agent has a unique, verifiable identity and operates under Role-Based Access Control (RBAC).

In practice this means that a RAN Optimisation Agent has read and write access within the RAN domain but no direct access to core network functions or raw customer data. The Change Management Agent can query the TKG and traverse the NKG but cannot execute a rollback without passing through the Policy Guardrail introduced in Part 4. Boundaries are explicit, enforced, and auditable - not assumed.
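A deny-by-default permission check along these lines might look like the sketch below. The role names, the `network_config` domain, and the `guardrail_approved` flag are assumptions made for illustration; the article does not prescribe a data model.

```python
from enum import Enum


class Action(Enum):
    READ = "read"
    WRITE = "write"
    ROLLBACK = "rollback"


# Hypothetical role definitions: role -> domain -> permitted actions.
ROLE_PERMISSIONS = {
    "ran_optimisation": {"ran": {Action.READ, Action.WRITE}},
    "change_management": {
        "tkg": {Action.READ},
        "nkg": {Action.READ},
        "network_config": {Action.ROLLBACK},
    },
}

# Actions that additionally require an explicit guardrail approval.
GUARDED_ACTIONS = {Action.ROLLBACK}


def is_allowed(role: str, domain: str, action: Action, guardrail_approved: bool = False) -> bool:
    """Deny by default; grant only what the role lists for that domain."""
    permitted = ROLE_PERMISSIONS.get(role, {}).get(domain, set())
    if action in GUARDED_ACTIONS:
        # A guarded action needs both the role grant and the guardrail's approval.
        return action in permitted and guardrail_approved
    return action in permitted
```

Here the RAN agent's request to read from a `core` or `customer_data` domain fails simply because no grant exists, and the Change Management Agent's rollback succeeds only when the guardrail has approved it.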

The Guardrail Framework

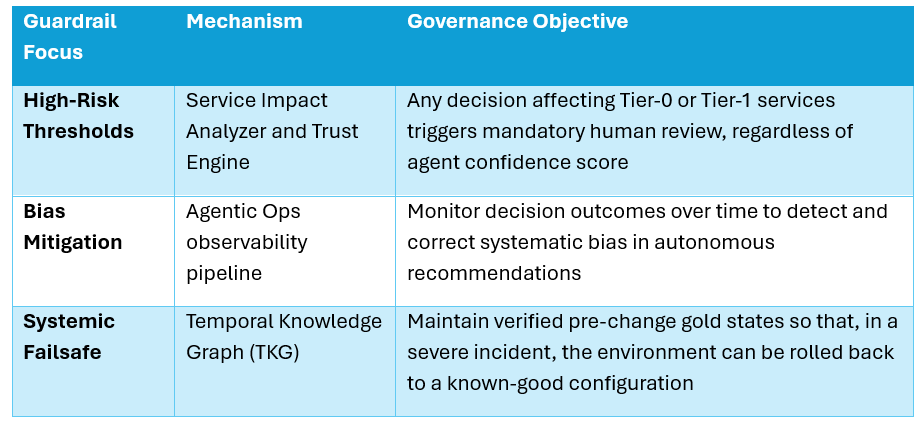

Beyond Zero Trust and RBAC, agent reasoning is bounded by a set of operational guardrails that govern when agents can act autonomously and when human judgment is required:

Operations Guardrail: Every agent conclusion carries a confidence score. If that score falls below a defined threshold - meaning the agent is not sufficiently certain about its diagnosis or recommendation - the workflow is automatically paused and a human engineer is notified. The agent does not guess when the stakes are high.

Safety Guardrail: Before any remediation action is executed, it is validated against a Digital Twin simulation of the network. If the simulation indicates that the proposed action could cause adverse outcomes - affecting services or customers beyond the intended scope - the action is blocked. This is particularly important for changes that touch shared infrastructure serving multiple network slices or customer segments.
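The Operations and Safety Guardrails can be read as two sequential gates in front of execution. The sketch below assumes a threshold of 0.85 and a `simulate` callable standing in for the Digital Twin; both are illustrative, as are the `Proposal` and `Decision` types.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    EXECUTE = "execute"
    ESCALATE_TO_HUMAN = "escalate_to_human"
    BLOCK = "block"


@dataclass(frozen=True)
class Proposal:
    action: str
    confidence: float           # agent's self-reported score in [0, 1]
    intended_scope: frozenset   # services the action is meant to touch


CONFIDENCE_THRESHOLD = 0.85  # illustrative value, not taken from the article


def evaluate(proposal: Proposal, simulate: Callable[[str], set]) -> Decision:
    """Apply the confidence gate first, then the simulation gate."""
    # Operations Guardrail: low confidence pauses the workflow for a human.
    if proposal.confidence < CONFIDENCE_THRESHOLD:
        return Decision.ESCALATE_TO_HUMAN
    # Safety Guardrail: `simulate` stands in for a Digital Twin run and
    # returns the set of services the action would affect.
    affected = simulate(proposal.action)
    if not affected <= proposal.intended_scope:
        return Decision.BLOCK  # impact spills beyond the intended scope
    return Decision.EXECUTE
```

Ordering the gates this way means an uncertain diagnosis goes to an engineer without spending a simulation run, while a confident proposal still has to show that its blast radius stays inside the scope it declared.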

Legal Guardrail: Every agent decision is accompanied by a Chain of Thought log - a step-by-step record of the reasoning that produced it, anchored in NKG graph paths and TKG timestamps. This log is immutable and forms the basis for audit, regulatory compliance, and explainability. As regulators turn increasing attention to AI accountability in critical infrastructure, this traceability is not optional.
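One common way to make such a log tamper-evident is to chain each record to the hash of the one before it. The sketch below assumes this hash-chain approach; the `ReasoningLog` class and its record fields are hypothetical. Strictly, a hash chain makes modification detectable rather than impossible, so it would normally be paired with write-once storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash the previous record's hash together with this entry's content."""
    body = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class ReasoningLog:
    """Append-only log in which each record commits to the one before it."""

    def __init__(self) -> None:
        self._records: list[dict] = []

    def append(self, entry: dict) -> str:
        prev_hash = self._records[-1]["hash"] if self._records else GENESIS
        record = {"entry": entry, "prev": prev_hash, "hash": _digest(prev_hash, entry)}
        self._records.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record breaks the chain."""
        prev_hash = GENESIS
        for record in self._records:
            if record["prev"] != prev_hash or record["hash"] != _digest(prev_hash, record["entry"]):
                return False
            prev_hash = record["hash"]
        return True
```

Each reasoning step, with its NKG path and TKG timestamp, would be appended as one entry. An auditor can then call `verify` to confirm that no step was altered after the fact.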

Data Privacy and Sensitive Information

Telcos hold large volumes of Personally Identifiable Information (PII) - customer identities, usage patterns, location data, and service history. Agentic systems must be designed from the ground up to minimise exposure of this data, even as they reason over it.

Data Minimisation in the NKG: The NKG stores only the metadata and relationships needed for causal reasoning - not full customer records. Customer identities remain in dedicated BSS and CRM systems. The NKG works with anonymised or pseudonymised identifiers, meaning an agent can determine that "a high-value customer segment is affected by this service path" without ever accessing the underlying personal data.
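As a rough illustration of how a pseudonymised identifier might be produced on the way into the NKG, the sketch below uses a keyed hash (HMAC-SHA256). The key, the field names, and the `to_nkg_node` helper are assumptions; the article does not specify the pseudonymisation scheme.

```python
import hashlib
import hmac

# Hypothetical secret held outside the NKG, for example on the BSS/CRM side.
PSEUDONYM_KEY = b"demo-key-rotate-and-store-securely"


def pseudonymise(customer_id: str) -> str:
    """Keyed hash: stable for joins, not reversible without the key."""
    tag = hmac.new(PSEUDONYM_KEY, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"cust_{tag[:16]}"


def to_nkg_node(crm_record: dict) -> dict:
    """Keep only the attributes needed for impact reasoning; drop everything else."""
    return {
        "id": pseudonymise(crm_record["customer_id"]),
        "segment": crm_record["segment"],
        "service_path": crm_record["service_path"],
    }
```

The same customer always maps to the same pseudonym, so an agent can still count affected high-value customers on a service path, but names, numbers, and other personal fields never leave the source system.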

Privacy-Preserving Model Training: The language and domain models that agents depend on are trained on sanitised, anonymised operational logs. Where deeper fine-tuning on sensitive data is genuinely required, techniques such as federated learning reduce data exposure by training models locally, where the data resides, and sharing only model updates rather than centralising the sensitive information itself.

Output Masking: Every agent-generated output - alerts, diagnoses, audit entries, and reasoning traces - passes through a masking layer before being surfaced in logs or dashboards. Credentials, raw customer identifiers, and other sensitive fields are stripped at the output boundary, ensuring that the observability layer does not inadvertently become a data exposure risk.
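A masking layer of this kind is often implemented as an ordered list of pattern-and-replacement rules applied to every outbound string. The three patterns below (credentials, phone-number-like digit runs, email addresses) are illustrative assumptions only; a real deployment would maintain a reviewed and tested rule set.

```python
import re

# Illustrative rules only: (compiled pattern, replacement).
MASK_RULES = [
    (re.compile(r"(?i)\b(password|token|api[_-]?key)\s*[=:]\s*\S+"), r"\1=[REDACTED]"),
    (re.compile(r"\+?\d{10,15}\b"), "[MSISDN]"),
    (re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"), "[EMAIL]"),
]


def mask(text: str) -> str:
    """Apply every rule in order before the text reaches logs or dashboards."""
    for pattern, replacement in MASK_RULES:
        text = pattern.sub(replacement, text)
    return text
```

Applying the rules at a single output boundary, rather than inside each agent, keeps the policy in one place and means a newly added agent cannot bypass it by accident.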

Ethical Guardrails and Systemic Safety

Security and privacy address known risks. Ethical guardrails address a subtler category: the risk that agents develop systematic patterns of behaviour that are technically within policy but operationally or ethically problematic.