The vision of the Dark NOC, a fully autonomous operations center, is often sold on the promise of speed. For critical infrastructure, however, the true currency is trust. As Communications Service Providers (CSPs) move toward TM Forum’s Autonomous Networks Level 5, the primary barrier to adoption is no longer the intelligence of the AI. It is the confidence of the operator. Gartner forecasts that by 2028, agentic AI will enable at least 15% of day-to-day work decisions to be made autonomously. In a Tier-1 network carrying life-critical traffic, deploying agentic AI that perceives, plans, and acts autonomously represents a profound operational risk unless it is wrapped in a rigorous architecture of governance and control.

The Governance Gap: Why Agents Are Different

To understand the governance challenge, we must understand the nature of an agent. A traditional script is predictable because it is rigid and follows a fixed procedure. An autonomous agent is goal-oriented.

When an agent observes a KPI drift, it creates a dynamic plan rather than executing a pre-written procedure. It decomposes the problem, decides which tools to use, such as querying inventory or checking change logs, and reasons over the data to form a conclusion. This loop of perception → planning → reasoning → tool use enables the system to handle unforeseen scenarios. It also introduces non-determinism. If we cannot predict exactly how an agent will solve a problem, we must strictly bound what it is allowed to do.
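The loop described above can be sketched in a few lines. This is a minimal illustration, not a production agent: the `Observation` type, the two tool functions, and the thresholds are all hypothetical, and a fixed rule stands in for the model-generated plan so that the example stays runnable and repeatable.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    kpi: str
    baseline: float  # assumed non-zero in this sketch
    current: float


# Hypothetical tools; real ones would call inventory and change-management systems.
def query_inventory(obs: Observation) -> str:
    return f"inventory queried for elements reporting {obs.kpi}"


def check_change_logs(obs: Observation) -> str:
    return f"change logs checked for events affecting {obs.kpi}"


TOOLS = {"query_inventory": query_inventory, "check_change_logs": check_change_logs}


def perceive(obs: Observation, tolerance: float = 0.05):
    """Perception: return the relative drift if it exceeds the tolerance, else None."""
    drift = abs(obs.current - obs.baseline) / abs(obs.baseline)
    return drift if drift > tolerance else None


def plan(drift: float) -> list:
    """Planning: a fixed rule standing in for a model-generated plan."""
    steps = ["check_change_logs"]
    if drift > 0.20:  # illustrative threshold for a wider investigation
        steps.append("query_inventory")
    return steps


def run_agent(obs: Observation) -> dict:
    drift = perceive(obs)
    if drift is None:
        return {"plan": [], "findings": [], "conclusion": "no action needed"}
    steps = plan(drift)
    findings = [TOOLS[name](obs) for name in steps]  # tool use
    # Reasoning: a real agent would weigh the findings; here we only summarize them.
    conclusion = f"{obs.kpi} drifted {drift:.0%}; {len(findings)} finding(s) to review"
    return {"plan": steps, "findings": findings, "conclusion": conclusion}
```

The point of the sketch is that the plan depends on what the agent observes: a small drift and a large drift produce different tool sequences. With a real model behind `plan`, that sequence cannot be known in advance, which is the source of the non-determinism.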

The Trust Layer: Enforcing Determinism

The solution lies in a ‘Trust Layer’ that wraps the agentic workforce in immutable guardrails. This layer fuses the generative power of Large Language Models (LLMs) with the deterministic rigidity of knowledge graphs to ensure safety. Two core mechanisms prevent autonomous agents from making unsafe decisions:

- Verified toolsets and deterministic guardrails

An agent in a Dark NOC cannot be allowed to hallucinate commands. It must be restricted to a ‘verified toolset’ containing a strict whitelist of approved API calls and actions. Even if an agent decides a configuration change is necessary, the Trust Layer enforces hard limits on parameters and operational windows. This ensures that probabilistic reasoning never results in unsafe actions.

- Semantic traceability

Trust requires proof. In a governed ANO architecture, every decision must be backed by a ‘reasoning trace’ anchored in the Network Knowledge Graph (NKG) and Temporal Knowledge Graph (TKG). When an agent recommends a rollback, it provides a citation pointing to the specific node in the topology and the specific timestamped change event that caused the fault. This allows human engineers to audit the decision instantly and transforms the AI into a transparent analyst.
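To make the two mechanisms concrete, the sketch below shows one way a Trust Layer check could gate an agent's proposed action. It is an illustration under stated assumptions: the action name `set_antenna_tilt`, its parameter limits, the maintenance window, and the `ReasoningTrace` fields are all hypothetical, and the window check assumes a window that does not cross midnight.

```python
from dataclasses import dataclass
from datetime import datetime, time
from typing import Optional


@dataclass(frozen=True)
class ToolPolicy:
    param_limits: dict  # parameter name -> (min, max)
    window_start: time  # operational window, assumed not to cross midnight
    window_end: time


# Hypothetical verified toolset: anything not listed here is rejected outright.
VERIFIED_TOOLSET = {
    "set_antenna_tilt": ToolPolicy({"tilt_deg": (0.0, 10.0)}, time(1, 0), time(5, 0)),
}


@dataclass(frozen=True)
class ReasoningTrace:
    topology_node_id: str       # node cited from the Network Knowledge Graph
    change_event_id: str        # event cited from the Temporal Knowledge Graph
    change_timestamp: datetime  # when the cited change occurred


class GuardrailViolation(Exception):
    pass


def authorize(action: str, params: dict, trace: Optional[ReasoningTrace], now: datetime) -> dict:
    """Deterministic checks applied to whatever the agent proposes."""
    policy = VERIFIED_TOOLSET.get(action)
    if policy is None:
        raise GuardrailViolation(f"{action!r} is not in the verified toolset")
    for name, value in params.items():
        if name not in policy.param_limits:
            raise GuardrailViolation(f"parameter {name!r} is not permitted for {action!r}")
        low, high = policy.param_limits[name]
        if not low <= value <= high:
            raise GuardrailViolation(f"{name}={value} is outside [{low}, {high}]")
    if not policy.window_start <= now.time() <= policy.window_end:
        raise GuardrailViolation("outside the operational window")
    if trace is None:
        raise GuardrailViolation("no reasoning trace supplied")
    # The approved record carries its citations so an engineer can audit it later.
    return {
        "action": action,
        "params": dict(params),
        "cites": {
            "topology_node_id": trace.topology_node_id,
            "change_event_id": trace.change_event_id,
            "change_timestamp": trace.change_timestamp.isoformat(),
        },
    }
```

The checks are plain comparisons with no model in the path, so the same proposal always yields the same verdict regardless of how the agent arrived at it. Requiring a trace before approval also means an action without a citation never reaches the network.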

Agentic Ops: Operating the Digital Workforce

Deploying this technology shifts the focus from managing software to orchestrating a workforce, bringing it under the domain of Agentic Ops (Agent OAM). This requires an Agentic OS architecture to govern agent identity, state, and lifecycle, which includes:

Identity and RBAC: A ‘Service Impact Agent’ must have different permissions than a ‘Network Optimizer’. Role-Based Access Control (RBAC) must be enforced at the agent level to prevent unauthorized access to core elements.

Lifecycle management: Operators need mechanisms to version-control agent behaviors, roll back underperforming agents, and persist state during hand-offs.

Observability: The health of the digital workforce must be monitored through metrics like decision latency, reasoning confidence distribution, and the rate of human override.
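As a rough illustration of two of these concerns, the sketch below pairs an agent-level permission check with a small metrics recorder. The role names mirror the examples above, but the permission strings, the `AgentIdentity` fields, and the `WorkforceMetrics` class are assumptions made for this example rather than part of any specific product.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping, enforced per agent rather than per user.
ROLE_PERMISSIONS = {
    "service_impact_agent": frozenset({"read_topology", "read_alarms"}),
    "network_optimizer": frozenset({"read_topology", "propose_config_change"}),
}


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    role: str
    version: str  # supports version control and rollback of agent behavior


def is_allowed(agent: AgentIdentity, permission: str) -> bool:
    """Unknown roles get no permissions at all."""
    return permission in ROLE_PERMISSIONS.get(agent.role, frozenset())


@dataclass
class WorkforceMetrics:
    decisions: int = 0
    overrides: int = 0
    latencies_s: list = field(default_factory=list)

    def record(self, latency_s: float, overridden: bool) -> None:
        """Log one agent decision and whether a human overrode it."""
        self.decisions += 1
        self.overrides += int(overridden)
        self.latencies_s.append(latency_s)

    def override_rate(self) -> float:
        return self.overrides / self.decisions if self.decisions else 0.0

    def mean_latency_s(self) -> float:
        return sum(self.latencies_s) / len(self.latencies_s) if self.latencies_s else 0.0
```

A rising override rate for one agent version is the kind of signal that could trigger the rollback described under lifecycle management, which is why the identity record carries a version.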

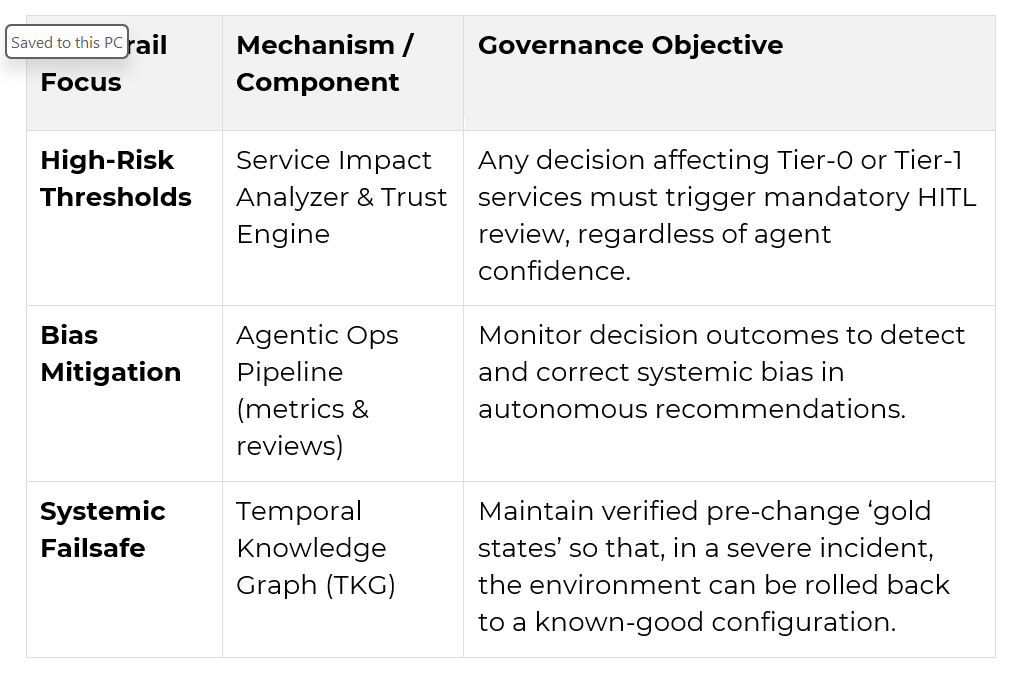

To operationalize these concepts, the architecture enforces specific governance objectives across the lifecycle: