Clinicians and AI: Collaborators in Patient Care

The next era of healthcare leadership will belong to those who can orchestrate collaboration between clinicians and intelligent systems. The question is no longer if AI belongs in care—it’s who will lead the partnership between humans and machines.

The Leadership Challenge: Knowledge Is Outpacing Humans

Medical knowledge once doubled over decades. By 1980, it doubled every seven years; by 2010, every 3.5 years. By 2020, it was projected to double every 73 days, and the pace may be faster still.

The implication is uncomfortable but unavoidable: no clinician, no matter how experienced, can stay current alone.

That is why the next generation of medical devices cannot behave like tools; they must behave like collaborators. There is already evidence of this shift: 94% of clinicians, researchers, and executives now consider AI adoption core to operations.

The Medical Device Transformation

For healthcare organizations, the transformation of medical devices into care collaborators is not about technology; it is about redefining how intelligence flows through the enterprise.

Once static instruments, medical devices are becoming AI-powered, hyperconnected systems capable of learning and improving over time. Nowhere is this shift more apparent than in diagnostics, where speed, accuracy, and context directly affect outcomes.

Software‑defined platforms are replacing fixed‑function hardware, enabling continuous updates, system‑level intelligence, and coordinated learning across fleets of devices. This is not incremental innovation—it’s a re‑architecture of how care intelligence is created and shared.

From Tools to Intelligent Partners

Imagine every device in your network not just reporting data but learning from every clinician interaction.

That’s the new frontier of operational intelligence.

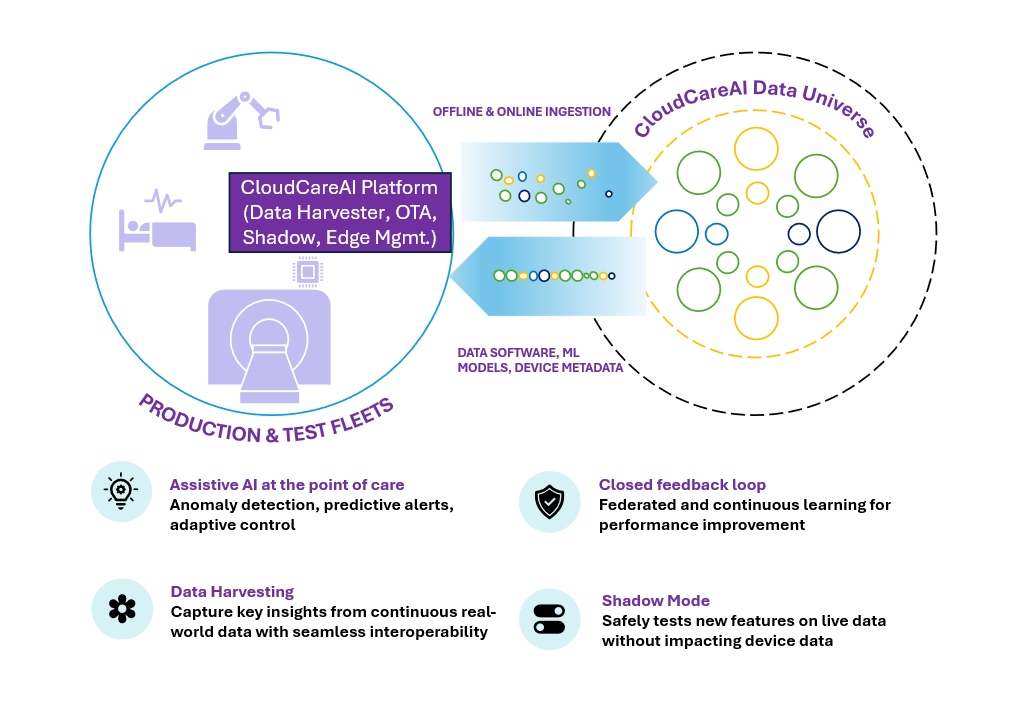

Platforms such as Wipro’s CloudCareAI enable this shift by transforming edge devices into scalable, future-ready systems where clinicians, engineers, and intelligent machines work together to deliver care.

At the edge, devices can now run deep neural networks, small language models (SLMs), and vision-language models (VLMs), enabling sophisticated inference directly within hospitals, clinics, ambulances, and remote care settings.

NVIDIA Edge Architecture in Real‑Time Clinical Workflows

NVIDIA edge computing platforms such as NVIDIA Jetson Orin, NVIDIA IGX, and NVIDIA Jetson Thor are designed to power real-time AI reasoning and decision support within clinical environments. These architectures allow continuous data streams from medical imaging devices, patient monitors, and diagnostic sensors to be processed locally, which makes sub-second inference and response times achievable.

In emergency rooms, operating theaters, and ambulances, this means AI models can analyze imaging scans across multiple modalities, detect anomalies, and provide contextual alerts instantly, without waiting for cloud connectivity.

By embedding NVIDIA AI infrastructure modules directly into medical devices, edge systems, and hospital networks, healthcare organizations can achieve low-latency, high-reliability AI workflows that respect patient privacy while maintaining clinical speed and accuracy. This turns edge intelligence from a technical capability into a real-time clinical partner that augments human expertise exactly where and when it matters most.
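To make the local-first pattern concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any NVIDIA or Wipro API: `process_locally`, `stand_in_model`, the alert threshold, and the latency budget are all hypothetical names and values. The idea is that inference and alerting happen on the device, while only derived results (not raw pixels) are queued for a later cloud upload.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict, List


def process_locally(
    frames: List[Dict],
    infer: Callable[[Dict], float],
    alert_threshold: float,
    latency_budget_s: float,
    sync_queue: Deque[Dict],
) -> List[Dict]:
    """Run inference on-device, alert at once, and defer cloud upload."""
    alerts = []
    for frame in frames:
        start = time.perf_counter()
        score = infer(frame)  # runs on the edge device, no network call
        elapsed = time.perf_counter() - start
        result = {
            "frame_id": frame["id"],
            "score": score,
            "within_budget": elapsed <= latency_budget_s,
        }
        if score >= alert_threshold:
            alerts.append(result)  # surfaced to the clinician immediately
        # Only the derived result is queued; raw pixels stay on the device.
        sync_queue.append(result)
    return alerts


def stand_in_model(frame: Dict) -> float:
    """Placeholder for a real on-device model: mean pixel intensity."""
    pixels = frame["pixels"]
    return sum(pixels) / len(pixels)


# Hypothetical input frames, for illustration only.
frames = [
    {"id": 1, "pixels": [0.1, 0.2, 0.1]},
    {"id": 2, "pixels": [0.9, 0.8, 0.95]},
]
queue: Deque[Dict] = deque()
alerts = process_locally(frames, stand_in_model, 0.5, 1.0, queue)
print([a["frame_id"] for a in alerts], len(queue))
```

In a real deployment the stand-in would be replaced by an accelerated model, and the queue would be drained by a separate uploader whenever connectivity returns.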

Remote Diagnostics with Quantized Vision-Language Models

Not every clinical moment happens in a well-equipped hospital. Rural clinics, ambulances, home monitoring systems, and telemedicine endpoints demand diagnostic intelligence that works reliably without cloud connectivity — and often without a specialist in the room.

This is where quantized Vision-Language Models (VLMs) deployed at the edge change the equation. Quantization reduces model size and computational overhead by storing weights at lower numerical precision, with the aim of preserving clinical inference quality, which allows sophisticated multimodal AI to run on compact embedded hardware. NVIDIA Jetson Thor, designed for exactly this constraint, brings GPU-accelerated AI compute into a form factor that fits naturally into mobile and remote care environments. The result is a VLM capable of interpreting medical images, video streams, and sensor data in real time, with no cloud round trip required.
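The core idea behind quantization can be sketched in a few lines of Python. The example below uses symmetric per-tensor int8 quantization, one of the simplest schemes; the article does not say which scheme a given deployment uses, and production toolchains typically add per-channel scales and calibration data. The function names and weight values are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the int8 codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, for illustration only.
weights = [0.42, -1.27, 0.003, 0.9, -0.55]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

# Each weight now needs 1 byte instead of 4 (float32), at the cost of a
# rounding error of at most about half the scale per weight.
print(codes, scale, max_error)
```

The trade-off is visible in the last line: storage shrinks by roughly 4x relative to float32, while each restored weight differs from the original by a small rounding error. Whether that error is clinically acceptable has to be validated per model and per task.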

What makes this clinically meaningful is not just speed — it is interpretability. The model does not return opaque predictions. It identifies the most likely condition, assigns a graded severity level, and generates a natural-language explanation of its reasoning — referencing the specific visual patterns, structural irregularities, or physiological deviations that informed its output. Clinicians can see not just what the model flagged, but where and why.
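One way to picture such an output is as a structured record rather than a bare label. The Python sketch below is a hypothetical schema, not the format of any specific model or product: the `Finding`, `Evidence`, and `Severity` names, the fields, and the sample values are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Tuple


class Severity(Enum):
    MILD = 1
    MODERATE = 2
    SEVERE = 3


@dataclass
class Evidence:
    """One visual pattern the model cites, with where it was seen."""
    description: str
    bounding_box: Tuple[int, int, int, int]  # (x, y, width, height) in pixels


@dataclass
class Finding:
    """Hypothetical schema for one interpretable model output."""
    condition: str
    severity: Severity
    explanation: str
    evidence: List[Evidence] = field(default_factory=list)

    def summary(self) -> str:
        """Render the finding as a short clinician-facing line."""
        regions = "; ".join(e.description for e in self.evidence) or "no regions cited"
        return f"{self.condition} ({self.severity.name.lower()}): {self.explanation} [{regions}]"


# Illustrative values only, not real model output.
finding = Finding(
    condition="suspected pneumothorax",
    severity=Severity.MODERATE,
    explanation="Absent lung markings beyond a visible pleural line.",
    evidence=[Evidence("pleural line, right apex", (312, 88, 140, 60))],
)
print(finding.summary())
```

Keeping the condition, severity, explanation, and cited regions as separate fields lets a viewer overlay the bounding boxes on the image while showing the explanation alongside, so the clinician can check the "where" and the "why" independently.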