In Part 3, we walked through the midnight maintenance scenario - an aggregation router upgrade that caused KPI dips ten minutes after the MOP completed. We saw three agents work in sequence: the KPI Drift Monitor flagging the deviation, the Change Management Agent resolving the identity gap, and the RCA Agent validating the causal path through the NKG.

But we treated those agents as a black box. We showed what they did, not how they actually work. That is what this part addresses.

The question is worth asking carefully: what makes an autonomous agent different from a sophisticated script? The answer lies in two things working together - a reasoning engine that constructs plans rather than following fixed procedures, and a trust layer that ensures every action stays within defined operational boundaries. Get the reasoning engine wrong and you have a system that cannot handle novel situations; get the trust layer wrong and you have one that cannot be safely deployed in a production network.

The Dual-Core Design

Every agent in the system has two cores working in parallel: an Intelligence Core that reasons over goals, and a Trust Layer that governs what actions are permissible. Neither works without the other.

Think of it this way: the Intelligence Core determines what should be done. The Trust Layer determines what can be done. The intersection of these two is where autonomous action becomes safe enough to deploy on live networks.
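That intersection can be sketched in a few lines of Python. This is a minimal illustration under assumed names: `ProposedAction`, `TrustPolicy` and the two boundary checks (an allow-list of action kinds and a cap on the number of affected services) are hypothetical, not the system's actual interfaces. The Intelligence Core's proposals are split into those the Trust Layer permits and those it escalates to a human.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """An action suggested by the Intelligence Core (hypothetical schema)."""
    kind: str               # e.g. "query", "alert", "rollback"
    target: str             # network element the action touches
    affected_services: int  # estimated blast radius


@dataclass(frozen=True)
class TrustPolicy:
    """Operational boundaries enforced by the Trust Layer (assumed fields)."""
    allowed_kinds: frozenset
    max_affected_services: int


def permitted(action: ProposedAction, policy: TrustPolicy) -> bool:
    # The Trust Layer answers "can this be done?", independently of why it was proposed.
    return (
        action.kind in policy.allowed_kinds
        and action.affected_services <= policy.max_affected_services
    )


def actionable(proposals, policy):
    # Intelligence Core: what *should* be done. Trust Layer: what *can* be done.
    # Only the intersection runs autonomously; everything else is escalated.
    approved = [a for a in proposals if permitted(a, policy)]
    escalated = [a for a in proposals if not permitted(a, policy)]
    return approved, escalated


if __name__ == "__main__":
    policy = TrustPolicy(
        allowed_kinds=frozenset({"query", "alert"}),
        max_affected_services=50,
    )
    proposals = [
        ProposedAction("query", "Router-A", 0),
        ProposedAction("alert", "Router-A", 12),
        ProposedAction("rollback", "Router-A", 12),
    ]
    approved, escalated = actionable(proposals, policy)
    print([a.kind for a in approved])   # ['query', 'alert']
    print([a.kind for a in escalated])  # ['rollback']
```

In this sketch `permitted` contains no reasoning logic at all, so the boundary check stays auditable however the proposals were generated.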

The Intelligence Core: Reasoning Over Goals, Not Procedures

Traditional automation follows a script. If condition A, then action B. This works well for known, predictable situations - and breaks the moment something falls outside the script's assumptions.

An autonomous agent works differently. Rather than following a fixed procedure, it constructs a plan based on the current situation and the goal it has been given. In the midnight scenario, the KPI Drift Monitor was not told "if latency rises on Router-A, send an alert." It was given a goal - detect meaningful KPI drift and determine whether it warrants escalation - and it built a plan to achieve that goal based on what it observed.

The reasoning loop that makes this possible has four stages:

Perception: The agent ingests live data from the monitoring layer - KPI streams, fault indicators, anomaly signals - and enriches this with context from the NKG (what does the topology look like around the affected element?) and the TKG (what changed recently, and when?). This is not passive data collection. The agent is actively building a situational picture.

Planning: The agent decomposes its goal into a sequence of sub-tasks. In the midnight scenario this looked like: query the TKG for recent changes in the relevant time window; traverse the NKG to identify which services and customers depend on the affected element; compare current KPI behaviour against historical baselines; determine whether the deviation is localised or spreading. The plan is constructed dynamically based on what the agent finds at each step.

Reasoning: At the core of every agent is a reasoning model - a combination of language models and domain-specific models - that interprets what the data means, identifies the most likely root cause, and determines the appropriate response. This is where the agent moves from observation to conclusion.

Tool Use: The agent does not act directly on the network. It identifies which tools it needs - an API call to the inventory system, a query to the performance analytics engine, a configuration diff comparison - and invokes them through governed interfaces. The tools are the hands. The reasoning engine is the brain.
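The four stages can be sketched as a single loop. Everything here is an assumption for illustration: the tool names (`tkg_recent_changes`, `nkg_dependents`, `kpi_vs_baseline`, `config_diff`, `neighbour_kpis`), the `GovernedTools` wrapper, the 3-sigma threshold and the stub data. The if/else at the end is a trivial stand-in for the reasoning model, which the text describes as a combination of language and domain-specific models. What the sketch does show is the structure: the plan starts small, grows according to each result, and every tool call passes through one logged, governed interface.

```python
from typing import Callable, Dict, List

# Assumed threshold for "meaningful" drift, in standard deviations.
DRIFT_SIGMA = 3.0

ToolFn = Callable[[str], dict]


class GovernedTools:
    """Hypothetical governed interface: the only path from agent to external systems.

    Unregistered tools are refused, and every call is appended to an audit log.
    """

    def __init__(self, tools: Dict[str, ToolFn]):
        self._tools = tools
        self.audit_log: List[str] = []

    def invoke(self, name: str, element: str) -> dict:
        if name not in self._tools:
            raise PermissionError(f"tool not registered: {name}")
        self.audit_log.append(name)
        return self._tools[name](element)


def run_agent(element: str, tools: GovernedTools) -> str:
    # Planning: an initial list of sub-tasks. The first two are Perception steps
    # that enrich the raw KPI signal with TKG and NKG context.
    plan = ["tkg_recent_changes", "nkg_dependents", "kpi_vs_baseline"]
    picture: Dict[str, dict] = {}  # the situational picture built so far

    while plan:
        step = plan.pop(0)
        # Tool use: each sub-task runs through the governed interface.
        result = tools.invoke(step, element)
        picture[step] = result

        # Re-planning: what was just found decides what to do next.
        if step == "tkg_recent_changes" and result["changes"]:
            plan.append("config_diff")
        if step == "kpi_vs_baseline" and result["sigma"] > DRIFT_SIGMA:
            plan.append("neighbour_kpis")  # localised or spreading?

    # Reasoning: a deliberately trivial stand-in for the reasoning model.
    if picture["kpi_vs_baseline"]["sigma"] <= DRIFT_SIGMA:
        return "no action"
    spreading = picture["neighbour_kpis"]["degraded"] > 0
    changed = bool(picture["tkg_recent_changes"]["changes"])
    scope = "spreading" if spreading else "localised"
    cause = "recent change" if changed else "no known change"
    return f"escalate: {scope} drift, {cause}"


if __name__ == "__main__":
    # Stub tools returning illustrative values, not real measurements.
    stubs = GovernedTools({
        "tkg_recent_changes": lambda e: {"changes": ["router upgrade"]},
        "nkg_dependents": lambda e: {"services": ["svc-1", "svc-2"]},
        "kpi_vs_baseline": lambda e: {"sigma": 4.2},
        "config_diff": lambda e: {"lines_changed": 7},
        "neighbour_kpis": lambda e: {"degraded": 0},
    })
    print(run_agent("Router-A", stubs))  # escalate: localised drift, recent change
    print(stubs.audit_log)
```

Note that `config_diff` and `neighbour_kpis` are never in the initial plan; they are added only because earlier steps found a recent change and a significant deviation.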

The Fusion of AI Paradigms: Why Three Layers Are Needed

One of the most important architectural decisions in building these agents is the combination of three distinct AI paradigms. Each has a role that the others cannot fill.

Generative AI - the Language Understanding Layer: Large and small language models (LLMs and SLMs) interpret unstructured data - MOP documents, change tickets, field reports, vendor manuals, troubleshooting guides, network configuration files. In the midnight scenario, the Change Management Agent needed to understand what the MOP actually described before it could reason about its impact. No deterministic system can read a MOP document and extract operational intent. Generative AI can.

Symbolic Reasoning - the Deterministic Layer: For diagnostic conclusions that will drive real network actions, probabilistic reasoning alone is not sufficient. The agent switches to deterministic logic - traversing the NKG to establish topological facts, and querying the TKG to verify causal relationships between timestamped events. This is what allows the system to present engineers with verifiable proof rather than a confidence score. When the RCA Agent concludes that the aggregation router upgrade caused the KPI dips, that conclusion is anchored in graph traversal, not probabilistic inference.

Purpose-Built Network Models - the Domain Intelligence Layer: General-purpose AI models do not understand how telecom networks behave. This third layer adds domain-specific intelligence: topology-aware graph models that understand network dependencies, temporal models trained on historical KPI patterns to distinguish genuine drift from normal variation, forecasting models for early degradation prediction, and edge-efficient SLMs optimised for interpreting vendor-specific logs and standard operating procedures. Together, these give the agent the operational fluency needed to make decisions that a generic AI system simply could not.
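A sketch of how the domain and symbolic layers might combine in the midnight scenario, under stated assumptions: the NKG fragment, every element name other than Router-A, the latency values and the 30-minute window are illustrative, and a plain z-score against a historical baseline stands in for a trained temporal model. The drift check flags the anomaly; the symbolic check then returns an explicit dependency path only if the change precedes the dip and the degraded element actually depends on the changed one.

```python
from collections import deque
from datetime import datetime, timedelta
from statistics import mean, stdev
from typing import Dict, List, Optional

# Illustrative NKG fragment (hypothetical): element -> elements that depend on it.
NKG: Dict[str, List[str]] = {
    "Router-A": ["Agg-Switch-1"],
    "Agg-Switch-1": ["Cell-101", "Cell-102"],
    "Cell-101": [],
    "Cell-102": [],
}


def is_drift(history: List[float], current: float, k: float = 3.0) -> bool:
    """Domain-layer stand-in: a z-score test against the historical baseline,
    used here in place of a trained temporal model."""
    mu, sd = mean(history), stdev(history)
    if sd == 0:
        return current != mu  # flat baseline: any change counts as deviation
    return abs(current - mu) > k * sd


def dependency_path(graph: Dict[str, List[str]], src: str, dst: str) -> Optional[List[str]]:
    """Breadth-first search for a dependency path from src to dst in the NKG."""
    queue = deque([[src]])
    seen = {src}
    while queue:
        path = queue.popleft()
        if path[-1] == dst:
            return path
        for nxt in graph.get(path[-1], []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def verify_causal_link(graph, change_elem, change_time, kpi_elem, dip_time,
                       window=timedelta(minutes=30)) -> Optional[List[str]]:
    """Symbolic-layer check. Returns the dependency path as evidence, or None.

    Both conditions must hold: the change precedes the KPI dip within the
    window (TKG timing), and the degraded element depends on the changed
    element (NKG topology).
    """
    if not (change_time < dip_time <= change_time + window):
        return None
    return dependency_path(graph, change_elem, kpi_elem)


if __name__ == "__main__":
    latency_ms = [20.1, 19.8, 20.3, 20.0, 19.9, 20.2]  # illustrative baseline
    upgrade_done = datetime(2024, 1, 1, 0, 0)
    dip_seen = upgrade_done + timedelta(minutes=10)
    if is_drift(latency_ms, 27.5):
        print(verify_causal_link(NKG, "Router-A", upgrade_done, "Cell-102", dip_seen))
        # ['Router-A', 'Agg-Switch-1', 'Cell-102']
```

Returning the path rather than a score is what makes the conclusion checkable: an engineer can walk the same edges in the NKG and confirm each one.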

Agent Archetypes: Two Examples

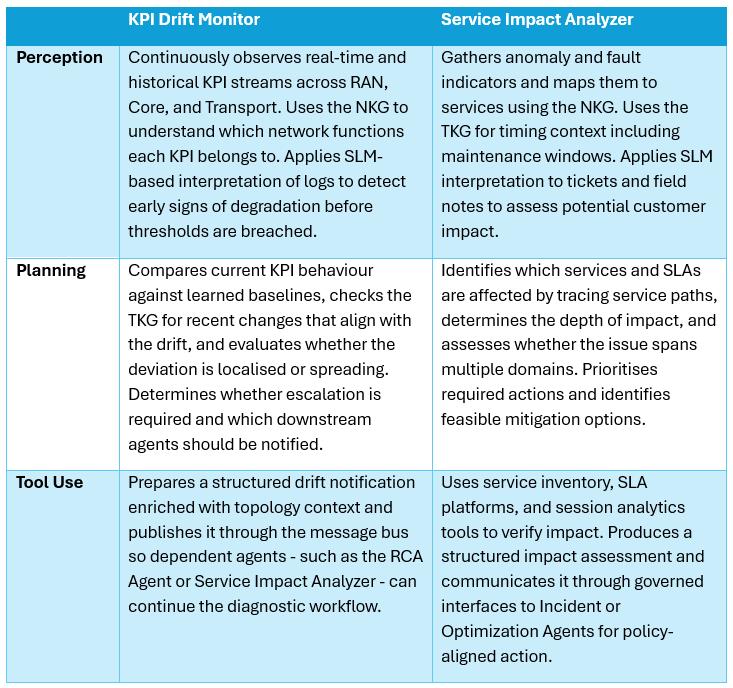

The following table illustrates how this architecture plays out in two specific agents. These are not hypothetical - both were in action during the midnight scenario in Part 3.