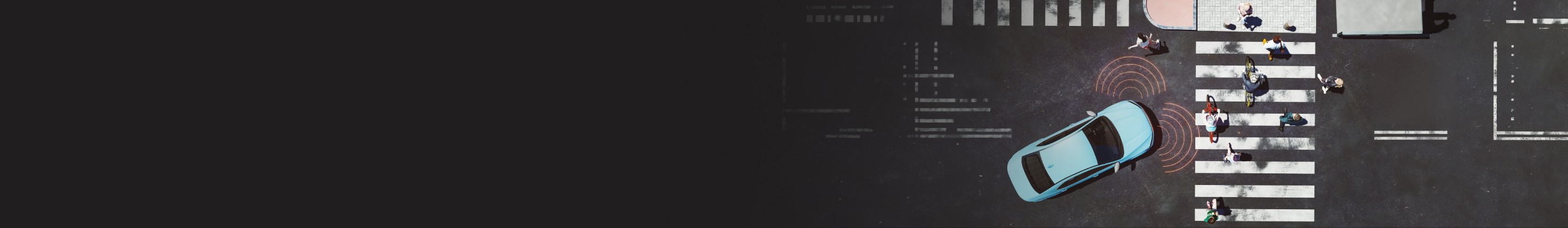

Autonomous mobility is transitioning from experimentation to a results-driven era, where success is defined by the ability to deliver at scale with real-world safety, operational efficiency, and regulatory alignment. Over the past decade, autonomous test fleets have logged hundreds of millions of miles across diverse cities and environments. In doing so, the industry has uncovered a critical insight: scaling autonomy is limited not by algorithms or sensors, but by the ability to validate perception, manage data quality, and prove safety consistently.

According to a McKinsey report, timelines for widespread Level 4 autonomy have shifted to 2032, signaling a more pragmatic emphasis on readiness and risk mitigation. Competitive differentiation is increasingly driven by software maturity, data management discipline, and validation rigor. At the same time, the economic viability of shared autonomous fleets depends on automation and standardization. These outcomes are achievable only when perception systems are trained on accurate, verifiable, and auditable data.

Within this context, LiDAR‑powered, AI‑enabled annotation is emerging as a strategic enabler, linking perception quality directly to time‑to‑market, cost efficiency, and the scalability of autonomous mobility programs.